Match Group's AI Play: Personalisation or Profit Over People?

- Match Group controls approximately half the global dating market through properties including Tinder, Hinge, Match.com, and OkCupid

- The company deploys AI systems that analyse swipe patterns, message response rates, and dozens of behavioural signals to determine profile rankings and visibility

- Match Group serves tens of millions of paying subscribers whose romantic opportunities are shaped by proprietary algorithms with little external oversight

- The company does not publish details about how its AI balances match quality against engagement metrics that drive subscription renewals

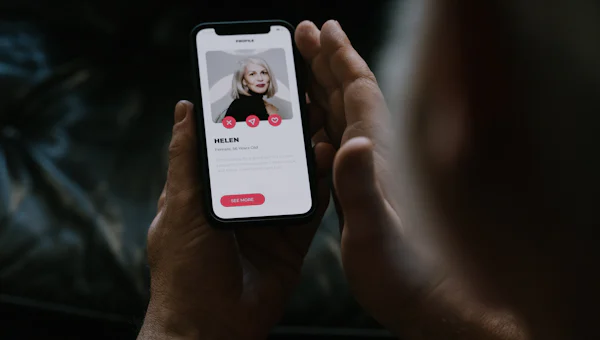

Match Group has spent the past two years talking up its AI capabilities to investors whilst keeping the specifics conveniently vague. That's starting to change. According to Anukool Mishra, the company's Director of Analytics, Match is now deploying artificial intelligence across its portfolio to 'hyper-personalise' dating experiences and monitor safety behaviours across platforms including Match.com, OkCupid, and its newer niche properties.

What Mishra describes as an AI system that works for users 'within their preferences and limits as an extension of their own dating approach' is, in practice, algorithmic curation that increasingly determines who meets whom across roughly half the global dating market. The implications go well beyond product development: Match Group's portfolio serves tens of millions of paying subscribers globally.

When one company's AI architecture shapes romantic possibility at that scale, questions about whose interests that technology actually serves become urgent business questions—not just ethical ones.

This is the conversation about algorithmic power that the dating industry has avoided for years by dressing it up in the palatable language of personalisation.

Match Group controls enough market share that its AI design choices—how profiles are ranked, which behaviours trigger safety flags, what constitutes an 'optimal' match—effectively set norms for digital dating itself. The real story isn't that Match is using AI. It's that the company now wields enough consolidated power to make unilateral architectural decisions about romantic opportunity for a substantial portion of single adults, with minimal external oversight and incentives that don't obviously align with helping users leave the platform.

What hyper-personalisation actually means

Mishra's framing positions AI as an assistant that enhances user agency. The technical reality is more complicated. Machine learning systems trained on behavioural data don't simply execute user preferences—they interpret, weight, and often override them based on predicted outcomes.

When Match Group discusses personalisation, it's describing systems that analyse swipe patterns, message response rates, time spent on profiles, session duration, and dozens of other signals to determine which profiles appear in a given user's queue and in what order. The stated goal, according to Mishra, is to surface matches that align with both explicit preferences and inferred intent.

That becomes problematic when the company's commercial incentives favour extended engagement over quick exits. Match Group's business model depends on subscription renewals and in-app purchases. An AI system optimised purely for successful relationships—defined as matches that lead users to delete the app—would cannibalise revenue.

This tension isn't theoretical. Internal documents from other social platforms have repeatedly shown that engagement optimisation often wins when it conflicts with user welfare. The dating industry has provided no evidence that its algorithms are designed differently.

Match Group does not publish details about how its AI systems balance match quality against engagement metrics. The weighting is proprietary. Users have no visibility into why they're shown certain profiles and not others, or how the queue they see differs from what the platform could show them.
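To make the weighting question concrete, the toy Python sketch below shows how a single blending weight can reorder the same pool of profiles. It is not Match Group's system, whose design is undisclosed; the `Candidate` fields, the scores and the `quality_weight` parameter are all hypothetical, and a production recommender would combine far more than two signals.

```python
from dataclasses import dataclass


@dataclass
class Candidate:
    name: str
    match_quality: float     # hypothetical predicted compatibility, 0..1
    engagement_value: float  # hypothetical predicted contribution to sessions/renewals, 0..1


def rank_queue(candidates, quality_weight):
    """Order candidates by a blended score.

    quality_weight is a hypothetical tuning knob in [0, 1]: 1.0 ranks purely on
    match quality, 0.0 ranks purely on engagement value.
    """
    def score(c):
        return quality_weight * c.match_quality + (1 - quality_weight) * c.engagement_value

    # Highest blended score first
    return [c.name for c in sorted(candidates, key=score, reverse=True)]


pool = [
    Candidate("A", match_quality=0.9, engagement_value=0.2),
    Candidate("B", match_quality=0.5, engagement_value=0.8),
    Candidate("C", match_quality=0.7, engagement_value=0.5),
]

print(rank_queue(pool, quality_weight=0.9))  # ['A', 'C', 'B']
print(rank_queue(pool, quality_weight=0.2))  # ['B', 'C', 'A']
```

Nothing about the candidate pool changes between the two calls; only the weight does. Yet the profile ranked first under a quality-heavy setting drops to last under an engagement-heavy one, and a user looking at either queue has no way to tell which setting produced it.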

The safety monitoring question

Match deploys what Mishra describes as AI-powered safety monitoring to identify problematic behaviour across its platforms. This includes analysing message content, flagging suspicious account patterns, and monitoring interactions for signs of harassment or fraud. On paper, this sounds like responsible platform governance.

In practice, dating apps' safety records suggest these systems either aren't working or aren't being resourced adequately. Match Group brands have faced lawsuits alleging failures to prevent assaults linked to platform matches. Journalists have repeatedly demonstrated how easily scammers evade detection systems. Trust and safety staffing levels across the industry remain low relative to user bases.

The question isn't whether AI can assist with safety monitoring—it obviously can. The question is whether Match Group is deploying these capabilities at the scale and sensitivity required, or whether safety AI serves primarily as liability management theatre whilst the company focuses its technical resources on engagement and monetisation.

Dating platforms benefit from positioning safety features prominently in public statements whilst keeping the uncomfortable details—prevalence rates of harassment, response times to reports, accuracy of detection systems—private.

Market concentration and algorithmic monoculture

Match Group's portfolio strategy means its AI architecture will likely propagate across multiple brands. The company has historically centralised technology development, with platform-specific customisation layered on top of shared infrastructure. Efficiencies of scale make this rational from an operating margin perspective.

The consequence is reduced diversity in how algorithmic matching works. When Tinder, Hinge, Match.com, OkCupid, and a dozen other properties all draw from the same core AI systems—even if implementation details vary—the dating market becomes an algorithmic monoculture. The same biases, the same optimisation targets, the same architectural choices get reproduced across brands that users perceive as competitors.

This matters because algorithmic dating already exhibits documented bias problems. Studies have shown that matching algorithms can amplify racial preferences, disadvantage users who don't fit conventional attractiveness standards, and entrench existing social hierarchies rather than disrupting them. When one company's approach dominates, there's limited competitive pressure to address these issues.

Bumble and Grindr operate their own AI systems, but their combined market share is a fraction of Match's. Smaller independents lack the data scale and technical resources to compete on algorithmic sophistication. That leaves Match Group's definition of 'fair and transparent AI systems' (Mishra's phrasing) functionally unchallenged.

What operators should watch

Dating apps have avoided the algorithmic accountability conversation that social media platforms now face routinely. That won't last. Regulatory frameworks including the EU Digital Services Act (DSA) and the UK Online Safety Act (OSA) create precedents for requiring platforms to explain how recommendation systems work and demonstrate that they're not causing harm.

Dating operators should expect increased pressure to provide algorithmic transparency—not just aspirational statements about responsible AI, but specific disclosures about what signals their systems use, how they weight user welfare against engagement, and what safeguards prevent discrimination. Match Group's scale makes it a likely early target for regulatory scrutiny or shareholder activism on these questions.

The competitive angle is equally significant. If Match Group's AI architecture becomes a regulatory liability or public relations problem, competitors with more transparent or demonstrably user-aligned approaches gain differentiation. That creates an opening for challengers, particularly in markets where Match's dominance is less entrenched.

For investors tracking MTCH, the AI discussion matters because it touches both growth potential and risk. Personalisation capabilities could improve conversion and retention if done well. But the combination of market concentration, misaligned incentives, and growing public scepticism about algorithmic curation creates meaningful downside scenarios that earnings calls don't address.

- Expect regulatory pressure for algorithmic transparency to intensify as dating apps face the same scrutiny as social media platforms under frameworks like the EU Digital Services Act

- Match Group's market dominance creates competitive opportunities for challengers who can credibly demonstrate more user-aligned AI systems and transparent matching practices

- The structural tension between engagement-optimised algorithms and successful relationship outcomes represents an under-discussed risk factor for MTCH investors